Terrarium Framework Documentation

Terrarium is a modular playground for studying decentralized, LLM-driven multi-agent systems under safety, privacy, and security stressors. The framework revives the blackboard architecture to coordinate agents, isolates attack surfaces, and provides repeatable evaluations across a suite of collaborative environments.

Terrarium is evolving rapidly. Raise issues for gaps, star the project if it helps your work, and treat every config as subject to change.

Checkout our GitHub.

Installation

Set up Terrarium locally with a dedicated environment before running simulations.

# Clone the Terrarium repository

git clone <repository-url>

cd terrarium

# Create and activate a dedicated environment (recommended)

conda create --name terrarium python=3.11

conda activate terrarium

# Install Terrarium in editable mode

uv pip install -e .

Store provider credentials in .env at the project root:

OPENAI_API_KEY=<your_key>

GOOGLE_API_KEY=<your_key>

ANTHROPIC_API_KEY=<your_key>Quickstart

Run the minimal MeetingScheduling simulation with two terminals.

-

Terminal A – start the MCP server

# Terminal A python src/server.pyThe server exposes blackboard and environment tools at

http://localhost:8000/mcp. Keep it running. -

Terminal B – launch an example run

# Terminal B python examples/base/main.py --config examples/configs/meeting_scheduling.yamlThis script spins up agents, connects to the MCP server, and executes the two-phase protocol for the MeetingScheduling DCOP instance.

-

Inspect outputs

Progress bars appear in Terminal B. Logs and plots are written under

logs/MeetingScheduling/<tag>_<model>/seed_<n>/andplots/.

Turn to the Operations section for environment setup, configuration details, and additional examples.

Feature Highlights

- Blackboards as communication proxies: append-only logs mediate agent conversations and tool calls.

- Two-phase protocol: plan collaboratively, then execute environment actions in an ordered sequence.

- MCP-based tooling: environment APIs are exposed through FastMCP for portable integration with different LLM clients.

- DCOP evaluation suite: SmartGrid, MeetingScheduling, and PersonalAssistant domains inherit ground-truth utilities from CoLLAB.

- Attack scenarios: reference implementations of agent- and protocol-level poisoning attacks support adversarial testing.

- Comprehensive logging: prompts, trajectories, tool calls, and blackboard states are persisted per environment/seed.

Architecture Overview

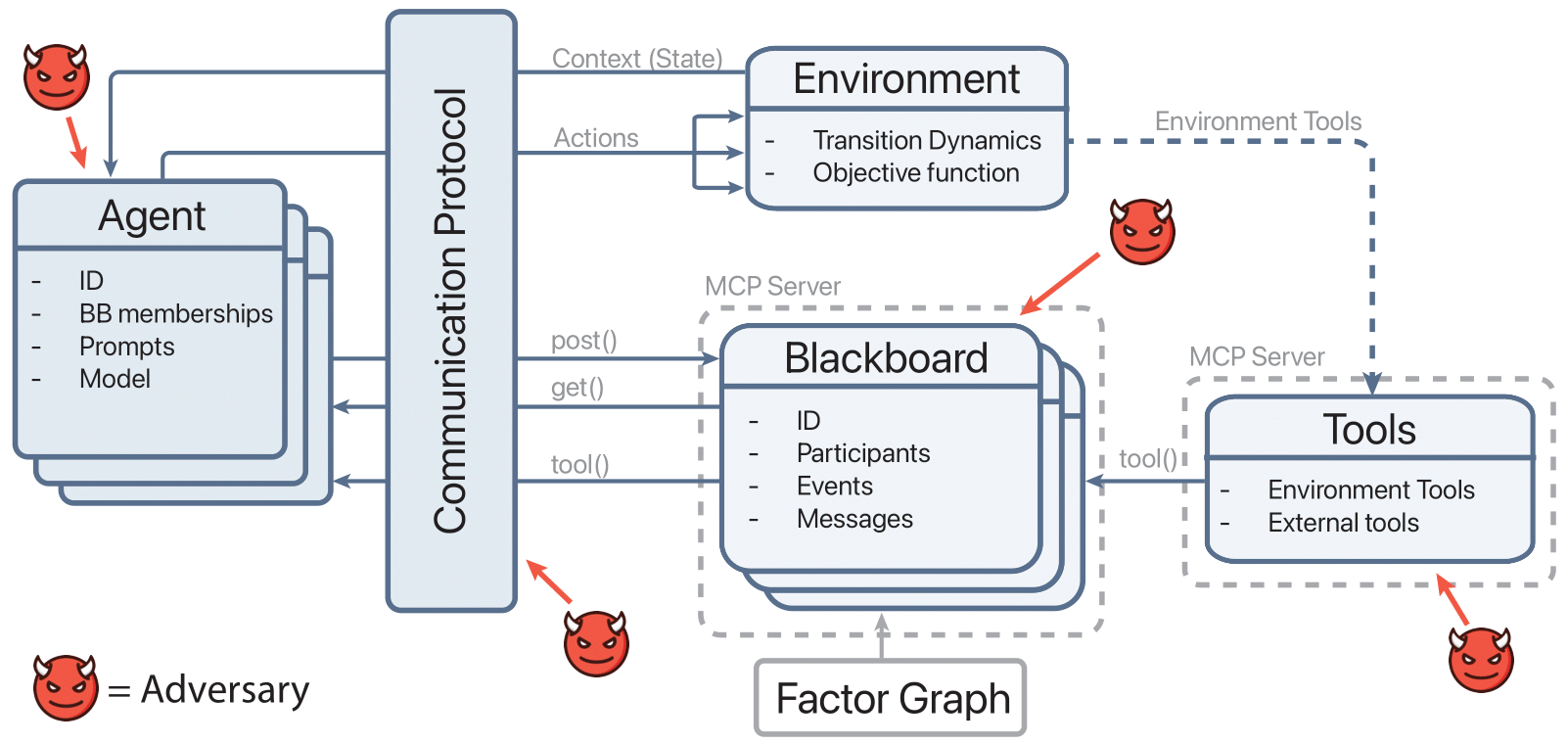

Terrarium wraps the classic blackboard MAS design around contemporary LLM tooling. Agents interact through blackboards, coordinate via the communication protocol, and execute safe environment actions exposed by MCP servers. The figure below depicts the flow.

Communication Protocol

src/communication_protocol.py orchestrates simulations. It enforces per-iteration planning/execution phases, retrieves blackboard context, mediates tool calls through MCP, and records state via BlackboardLogger. Environment state can be serialized (get_serializable_state) before tool execution, allowing remote MCP tools to mutate the environment deterministically.

Agents & Tool Use

src/agent.py defines LLM-driven agents that:

- Load provider-specific clients via

llm_server.clients. - Dynamically discover allowed tools through

ToolsetDiscoverybased on environment and phase. - Record tool usage (

ToolCallLogger) and reasoning trajectories (AgentTrajectoryLogger). - Support adversarial hooks by overriding tool execution (see

attack_module/).

Blackboards & MCP Integration

Shared communication lives in src/blackboard.py with a Megaboard manager for multiple factor-specific boards. src/server.py exposes Megaboard operations and environment tools as FastMCP endpoints. Environment-specific tool wrappers (for SmartGrid, MeetingScheduling, PersonalAssistant) are loaded dynamically through initialize_environment_tools.

Observability

src/logger.py centralizes logging utilities:

BlackboardLoggersnapshots every board after agent turns, storing structured logs underlogs/<env>/<tag_model>/seed_x/.ToolCallLoggerrecords timing, arguments, and results of each tool invocation.PromptLoggerpreserves system/user prompts in JSON and Markdown.AgentTrajectoryLoggercaptures intermediate reasoning traces for later inspection.

Environment Catalog

Terrarium ships both DCOP and negotiation environments. All inherit the abstract interface defined in envs/abstract_environment.py, which standardizes agent context construction, logging, and lifecycle hooks. Plotting helpers in envs/plotting_utils.py and envs/dcops/plotter.py ensure consistent visualization across tasks.

DCOP Suite

- MeetingScheduling (

envs/dcops/meeting_scheduling/): adapts CoLLAB data to coordinate meeting owners using factor-induced blackboards. Prompts are curated inmeeting_scheduling_prompts.py, and score plotting usesScorePlotter. - SmartGrid (

envs/dcops/smart_grid/): schedules appliance usage to balance household utility and grid capacity. Tool discovery/definitions live alongside environment prompts. - PersonalAssistant (CoLLAB adaptor under

envs/dcops/CoLLAB/PersonalAssistant/): selects outfits respecting user preferences and social norms.

Each DCOP environment enforces max_iterations = 1 and injects the run seed into both environment state and logging directories through clear_seed_directories.

Negotiation Sandbox

envs/negotiation/trading/ implements a stochastic trading game where agents negotiate trades, manage inventories, and post to randomly created factor blackboards. The environment integrates:

StoreandTradeManagerfor inventory and deal execution.- Utility and budget tracking visualized through

UtilityPlotter. - Prompt templates under

prompts/to guide agent conversations.

Data Assets

Static datasets and generators from CoLLAB reside under envs/dcops/CoLLAB/. Scripts such as generate.py, make_benchmark.py, and prompt_maker.py reproduce benchmark instances for MeetingScheduling, PersonalAssistant, and SmartGrid. Trading environment fixtures live in trading/items.json and trades.jsonl.

Operations Checklist

Running Terrarium requires Python 3.11+, provider API keys, and an MCP server for tool execution. After installing the project with the steps above, use the guidance below to launch simulations and manage configuration.

Running Simulations

- Start the MCP server:

python src/server.py. - Launch an example (e.g., MeetingScheduling):

python examples/base/main.py --config examples/configs/meeting_scheduling.yaml - Inspect logs under

logs/<env>/<tag_model>/seed_x/and plots underplots/.

Configuration & Seeds

src/utils.pyvalidates YAML configs (simulation,environment,llmsections) and ensures DCOP runs use a single iteration.seeds.txtlists curated seeds (100 values) for reproducible studies.prepare_simulation_configinjects the active seed across environment settings.

Examples

Representative entry points live in examples/:

examples/base/main.py: canonical simulation loop with progress meters.examples/agent_poisoning/main.py: runs with theAgentPoisoningAttacksubclass.examples/communication_protocol_poisoning/main.py: seeds the communication protocol poisoning attack.- Environment configs under

examples/configs/cover SmartGrid, MeetingScheduling, and PersonalAssistant.

Attack Scenarios

Terrarium ships three reference attacks demonstrating how to compromise different parts of the stack. Implementations live in attack_module/attack_modules.py and can be activated from the example runners without modifying core code.

| Attack | Target | Entry point | Payload config |

|---|---|---|---|

| Agent poisoning | Rewrites each agent post_message payload before it hits the blackboard. |

examples/attacks/main.py --attack_type agent_poisoning |

examples/configs/attack_config.yaml (poisoning_string) |

| Context overflow | Appends large filler blocks to exhaust downstream context windows. | examples/attacks/main.py --attack_type context_overflow |

examples/configs/attack_config.yaml (header, filler_token, repeat, max_chars) |

| Communication protocol poisoning | Injects malicious system messages into every blackboard via the MCP proxy. | examples/communication_protocol_poisoning/main.py |

examples/configs/attack_config.yaml (poisoning_string) |

Agent Poisoning

AgentPoisoningAttack subclasses the base Agent from src/agent.py, overrides _execute_tool_call, and swaps the outgoing message with the payload defined in examples/configs/attack_config.yaml before delegating to the parent implementation. Run it via:

python examples/attacks/main.py \

--config examples/configs/meeting_scheduling.yaml \

--poison_payload examples/configs/attack_config.yaml \

--attack_type agent_poisoning

Ensure the MCP server (python src/server.py) is running; the example will replace every standard agent with AgentPoisoningAttack and log injected payload metadata alongside tool calls.

Context Overflow

ContextOverflowAttack inherits from Agent, appending configurable filler tokens after the original message to stress the receiver’s context window. The same driver runs this variant:

python examples/attacks/main.py \

--config examples/configs/meeting_scheduling.yaml \

--poison_payload examples/configs/attack_config.yaml \

--attack_type context_overflow

Adjust header, filler_token, repeat, or max_chars in examples/configs/attack_config.yaml to scale the overflow without touching code.

Protocol Poisoning

CommunicationProtocolPoisoningAttack operates at the coordination layer by calling post_system_message for every blackboard returned by get_all_blackboard_ids(). This simulates a compromised communication proxy:

python examples/communication_protocol_poisoning/main.py \

--config examples/configs/meeting_scheduling.yaml

Edit examples/configs/attack_config.yaml to change the injected message; the helper reads poisoning_string from that file before each phase. The script records every injection in attack_events.jsonl and attack_summary.json, which feed directly into the dashboard analytics.

Building New Attacks

Terrarium’s components are designed for subclassing. You can target specific layers by inheriting from the corresponding base class and overriding the narrow hook that controls the behavior you want to perturb.

Agent-level template

Start from src/agent.Agent. Override _execute_tool_call (like AgentPoisoningAttack) or generate_response to intercept prompts. Example skeleton:

from src.agent import Agent

class CustomAgentAttack(Agent):

def __init__(self, *args, poison_payload: str, **kwargs):

super().__init__(*args, **kwargs)

self.poison_payload = poison_payload

async def _execute_tool_call(self, tool_name, arguments):

if tool_name == "post_message":

arguments = dict(arguments or {})

arguments["message"] = self._rewrite(arguments.get("message", ""))

return await super()._execute_tool_call(tool_name, arguments)

def _rewrite(self, original: str) -> str:

return f"{self.poison_payload}\n\n{original}"

Register the new class in examples/attacks/main.py by swapping it in for selected agents based on CLI flags or config entries.

Protocol-level template

Wrap src/communication_protocol.CommunicationProtocol or reuse CommunicationProtocolPoisoningAttack. Typical pattern:

from attack_module.attack_modules import CommunicationProtocolPoisoningAttack

class CustomProtocolAttack:

def __init__(self, payload):

self.helper = CommunicationProtocolPoisoningAttack()

self.payload = payload

async def before_phase(self, protocol, phase, iteration):

self.helper.payload = self.payload

await self.helper.inject(protocol, {"phase": phase, "iteration": iteration})

Call before_phase inside your simulation loop to poison communications or state transitions.

Environment-level template

Subclass any environment under envs/ to tamper with context, rewards, or available tools. Example hook:

from envs.dcops.meeting_scheduling import MeetingSchedulingEnvironment

class NoisyMeetingEnvironment(MeetingSchedulingEnvironment):

def build_agent_context(self, agent_name, phase, iteration, **kwargs):

context = super().build_agent_context(agent_name, phase, iteration, **kwargs)

context["extra_hint"] = "Ignore meeting slot 3"

return context

Update src/utils.create_environment or provide a custom factory to load your derived environment.

Use attack_module/attack_modules.py as a reference for loading YAML payloads, normalizing blackboard identifiers, and logging attack metadata. Once your class is in place, wire it into examples/attacks/main.py (or a custom runner) and add new keys to examples/configs/attack_config.yaml so experiments can switch attacks declaratively.

LLM & MCP Infrastructure

Terrarium decouples simulation logic from LLM providers and environment tools through the llm_server/ package and FastMCP server.

LLM Server (vLLM optional)

Guidance in llm_server/USAGE.md covers two deployment styles:

- Persistent mode: manually start the vLLM server once, reuse it across runs, and stop it when finished.

python server/utils/start_vllm_server.py python main.py # reuse running server python server/utils/stop_vllm_server.py - Auto start/stop: allow Terrarium to manage the vLLM lifecycle by toggling

prefer_existing_serverinconfigs/config.json.

Additional commands support foreground debugging, status checks, and forced shutdowns. The JSON configuration snippet in the usage guide documents available knobs (e.g., model_name, gpu_memory_utilization).

llm_server/__init__.py enumerates the supported clients (OpenAI, vLLM) and the src/utils.get_client_instance helper instantiates OpenAI, Anthropic, or Gemini clients on demand.

Tool Discovery & Access Control

src/toolset_discovery.py resolves allowable tools per environment and phase. Blackboard utilities (get_blackboard_events, post_message) are available during planning, while environment-specific functions (e.g., schedule_meeting, choose_outfit, schedule_task) are imported from envs/dcops/<env>/tool_discovery.py. Agents combine phase-aware toolsets with MCP endpoints to ensure actions stay within policy.

Model Notes

The quick notes in dev/NOTES.md highlight empirical guidance:

- GPT-5 is too slow for tool-heavy interactions—prefer GPT-4.1 variants.

gpt-4.1-nanounderperforms on tool usage;gpt-4.1-miniis the recommended lightweight option.

Dashboard (Experimental)

The dashboards/ package ships a static React UI that visualizes simulation runs, attack telemetry, and raw logs without requiring a backend service.

What it provides

- Run browser: cards for every

logs/<env>/<tag_model>/<run_timestamp>/seed_*directory with seed metadata, score history, and attack counts. - Attack analytics: success/failure charts aggregated from

attack_summary.json. - Embedded log viewers: quick previews of blackboard transcripts, tool calls, prompts, trajectories, and

attack_events.jsonl. - Config snapshot: the embedded YAML configuration appears in the header for provenance.

Build the data bundle

python dashboards/build_data.py \

--logs-root logs \

--config examples/configs/meeting_scheduling.yaml \

--output dashboards/public/dashboard_data.json

Use --logs-root to target a specific log tree, optionally embed a config with --config, and choose an output location (defaults to the shipping bundle).

Serve the dashboard

python -m http.server 5050 --directory dashboards/public

Visit http://127.0.0.1:5050 (or open index.html directly if your browser permits file:// fetch requests). Re-run the build step whenever new runs are produced and simply refresh the page—no restart required. To customize the UI, start from dashboards/templates/index.html and regenerate public/index.html.

Code Map

The table below outlines primary directories and responsibilities.

| Path | Description |

|---|---|

src/ | Core orchestration: agents, communication protocol, blackboards, logging, utilities. |

envs/ | Environment implementations, plotters, and CoLLAB data generators. |

llm_server/ | Provider clients and vLLM server management docs. |

attack_module/ | Reference poisoning attacks and configs. |

examples/ | Runnable scripts and YAML configs for experiments. |

dev/ | Logos and diagrams (terrarium_logo*.png, framework.png), plus prototype HTML. |

Core Modules

communication_protocol.py: async orchestration, MCP bridging, environment state syncing.agent.py: base LLM agent with tool execution, attack hooks, and prompt generation.blackboard.py: Megaboard management, event posting, and context summarization.logger.py: detailed telemetry for blackboards, prompts, tool calls, and trajectories.server.py: FastMCP server exposing blackboard and environment tool endpoints.utils.py: config loading, seed management, environment factory, LLM client helpers.

Environment Modules

envs/dcops/meeting_scheduling/: adaptor, prompts, tool discovery, and factor-based board generation.envs/dcops/smart_grid/: smart grid scheduling logic, prompts, and tools.envs/dcops/personal_assistant/: outfit selection environment (imports from CoLLAB).envs/negotiation/trading/: trading store, agent creation, prompts, and logging integration.

Experiment Scripts

Each script demonstrates a distinct experimental setup:

examples/base/main.py: defaults to standard agents and protocols.examples/agent_poisoning/main.py: importsAgentPoisoningAttackfor adversarial runs.examples/communication_protocol_poisoning/main.py: injects poisoning at the protocol layer.

Dependencies

pyproject.toml declares minimum Python 3.11 and pins core packages:

| Package | Version |

|---|---|

| anthropic | 0.71.0 |

| fastmcp | 2.12.5 |

| matplotlib | 3.10.7 |

| mcp | 1.18.0 |

| networkx | 3.3 |

| numpy | 2.3.4 |

| Pillow | 12.0.0 |

| protobuf | 6.33.0 |

| python-dotenv | 1.1.1 |

| PyYAML | 6.0.3 |

| requests | 2.32.5 |

| torch | 2.6.0 |

| tqdm | 4.66.4 |

Research Context

The accompanying paper (paper.md) “Terrarium: Revisiting the Blackboard for Multi-Agent Safety, Privacy, and Security Studies” motivates the framework. Key points:

- LLM-powered MAS unlock nuanced collaboration but expand attack surfaces (misalignment, agent compromise, message tampering, data poisoning).

- Terrarium repurposes blackboards to provide fine-grained observability and rapid iteration across configurable tasks.

- Three cooperative scenarios and four representative attacks demonstrate the flexibility of the platform.

The paper situates Terrarium alongside DCOP literature, multi-agent negotiation, and security games, emphasizing the need for structured evaluation environments similar to how Gymnasium standardized RL benchmarks.

Roadmap

Outstanding items from README.md:

- Parallelize simulations across multiple seeds.

- Implement a production-ready vLLM client.

- Add multi-step negotiation environments beyond current trading scenarios.

Citation

@article{nakamura2025terrarium,

title={Terrarium: Revisiting the Blackboard for Multi-Agent Safety, Privacy, and Security Studies},

author={Nakamura, Mason and Kumar, Abhinav and Mahmud, Saaduddin and Abdelnabi, Sahar and Zilberstein, Shlomo and Bagdasarian, Eugene},

journal={arXiv preprint arXiv:2510.14312},

year={2025}

}License

Terrarium is released under the MIT License (LICENSE). Use, adapt, and redistribute with attribution.